Implementation of basic Hadoop commands

Jan 07, 2020

In this article, we'll discuss the implementation of basic Hadoop commands.

start-all.sh: Used to start all Hadoop daemons at once. Issuing it on the master machine will start the daemons on all the nodes of a cluster.

Syntax: start-all.sh

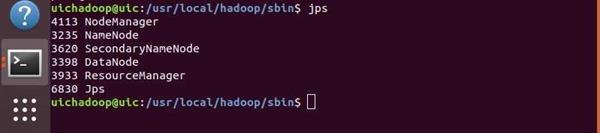

jps (Java Virtual Machine Process Status Tool): This command lists the Java processes running on the machine. It is used to check which Hadoop daemons, such as NameNode, DataNode, ResourceManager and NodeManager, are running.

Syntax: jps

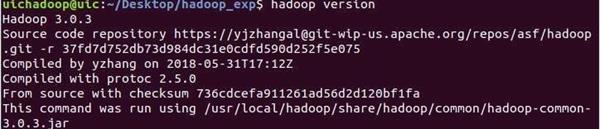
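Instead of reading the jps output by eye, it can be compared against a list of expected daemons. The following is only a minimal sketch: the function name `missing_daemons` is made up for illustration, and the daemon list assumes a setup where the four daemons named above all run on the same machine.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads jps-style output ("<pid> <ProcessName>" per line)
# on stdin and prints every expected daemon that is NOT listed.
# Assumption: all four daemons are expected on this one machine.
missing_daemons() {
  local expected="NameNode DataNode ResourceManager NodeManager"
  local running d
  running="$(awk '{ print $2 }')"   # keep only the process-name column
  for d in $expected; do
    # -x matches whole lines, so a SecondaryNameNode entry
    # is not mistaken for NameNode.
    printf '%s\n' "$running" | grep -qx "$d" || echo "$d"
  done
}

# Usage (requires a JDK on the PATH):
#   jps | missing_daemons
```

If the function prints nothing, every expected daemon was found in the jps output.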

hadoop version: This command is used to check the version of Hadoop you are currently working with.

Syntax: hadoop version

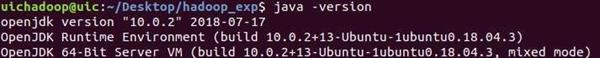
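When a script needs only the version number, it can be cut out of the command's output. This is a small sketch, assuming the first output line has the form `Hadoop <version>`; the function name `hadoop_version_number` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extracts the version number from `hadoop version`
# output read on stdin.
# Assumption: the first line looks like "Hadoop <version>".
hadoop_version_number() {
  awk 'NR == 1 && $1 == "Hadoop" { print $2 }'
}

# Usage (requires Hadoop on the PATH):
#   hadoop version | hadoop_version_number
```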

java -version: This command is used to check the Java version you are currently working with.

Syntax: java -version

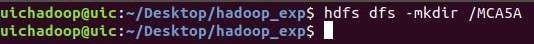

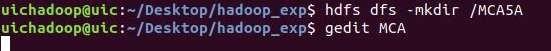

mkdir: This command is used to create directories in HDFS. With the -p option, missing parent directories are created as well.

Syntax: hdfs dfs -mkdir [-p] <paths>

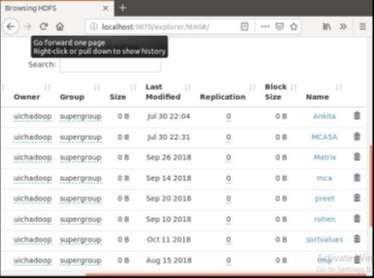

To check whether your directory was created at the remote location, open a browser and go to the following address:

localhost:9870/explorer.html#/

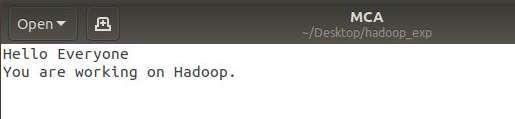
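The same check can also be done from the terminal. A minimal sketch, assuming the `hdfs` client is on the PATH and the cluster is running; the function name `hdfs_dir_exists` and the path `/demo/input` are made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds if the given HDFS path exists and is a directory.
# `hdfs dfs -test -d` reports the result through its exit status only.
hdfs_dir_exists() {
  hdfs dfs -test -d "$1"
}

# Usage (needs a running cluster); /demo/input is an example path:
#   hdfs dfs -mkdir -p /demo/input
#   hdfs_dir_exists /demo/input && hdfs dfs -ls /demo
```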

gedit: This is the default text editor of the GNOME desktop environment. One of the neatest features of this program is that it supports tabs, so you can edit multiple files.

Syntax:

gedit <file_name>

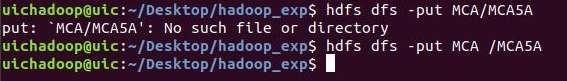

put: This command is used to copy files from the local file system to HDFS. In other words, it uploads a file to the directory created at the remote location.

Syntax:

hdfs dfs -put <localsrc> <destination>

After that, check that your file appears at the remote location, in the directory you created earlier.

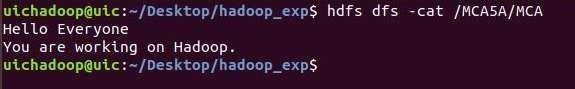
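The create, upload and check steps can be chained in one small function. This is a sketch under stated assumptions, not a definitive script: `upload_to_hdfs` is a hypothetical name, the paths in the usage comment are examples, and the `hdfs` client is assumed to be on the PATH.

```shell
#!/usr/bin/env bash
# Hypothetical helper: uploads a local file into an HDFS directory,
# then lists that directory so the new file can be seen.
upload_to_hdfs() {
  local src="$1" dest_dir="$2"
  if [ ! -f "$src" ]; then
    echo "no such local file: $src" >&2
    return 1
  fi
  # Create the target directory (and parents) if it does not exist yet.
  hdfs dfs -mkdir -p "$dest_dir" || return 1
  # -put refuses to overwrite an existing file unless -f is given.
  hdfs dfs -put "$src" "$dest_dir" || return 1
  # List the directory so the uploaded file is visible.
  hdfs dfs -ls "$dest_dir"
}

# Usage (example paths):
#   upload_to_hdfs ./sample.txt /demo/input
```

Stopping at the first failing step keeps a failed upload from being hidden by a successful listing.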

cat: This HDFS command reads a file on HDFS and prints its content to standard output.

Syntax:

hdfs dfs -cat URI [URI ...]

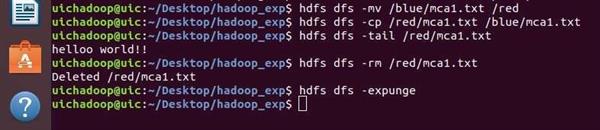

expunge: This HDFS command empties the trash.

Syntax:

hdfs dfs -expunge

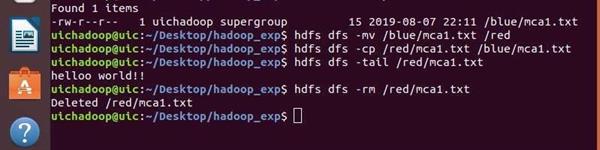

rm: This command is used to remove a file from HDFS.

Syntax:

hdfs dfs -rm /[dirname]/[filename]
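Whether a removed file can still be recovered depends on the trash. When the trash is enabled on the cluster (a configuration assumption: `fs.trash.interval` greater than 0), `hdfs dfs -rm` moves the file into the trash instead of deleting it right away, and the `-skipTrash` option bypasses that. The sketch below wraps both variants; `remove_from_hdfs` is a hypothetical helper name and the paths are examples.

```shell
#!/usr/bin/env bash
# Hypothetical helper: removes an HDFS file.
# Pass "permanent" as the second argument to bypass the trash.
remove_from_hdfs() {
  local path="$1" mode="${2:-trash}"
  if [ "$mode" = "permanent" ]; then
    # Deleted immediately; the file cannot be restored from the trash.
    hdfs dfs -rm -skipTrash "$path"
  else
    # Goes to the trash if the trash is enabled on the cluster.
    hdfs dfs -rm "$path"
  fi
}

# Usage (example paths):
#   remove_from_hdfs /demo/input/sample.txt
#   remove_from_hdfs /demo/input/old.txt permanent
```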

cp: This command is used to copy files from a source to a destination within HDFS.

Syntax:

hdfs dfs -cp [source] [destination]

tail: This command is used to show the last 1KB of the file.

Syntax:

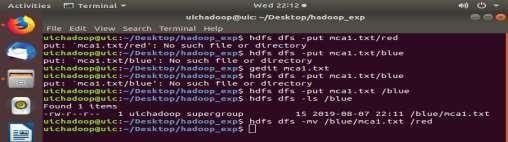

hdfs dfs –touchz /directory/filenamemv: This command is similar to the UNIX mv command, and it is used for moving a file from one directory to another directory within the HDFS file system.

Syntax:

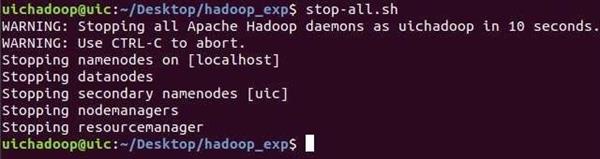

hdfs dfs -mv /[source_dir_name]/[file_name] /[destination_dir_name]

stop-all.sh: Used to stop all Hadoop daemons at once. Issuing it on the master machine will stop the daemons on all the nodes of a cluster.

Syntax:

stop-all.sh